Anki vs AI Flashcards: What Cognitive Science Says About Long-Term Memory

Introduction

Learning is an investment. A medical student memorizing anatomy, a software engineer learning a new framework, a parent helping a child study for the SAT: all of us are spending time and attention we will not get back. The question is whether we are getting the best possible return.

For decades the gold standard for efficient long-term memorization has been spaced repetition, and the tool most serious learners reach for is Anki, a free, open-source flashcard program that has quietly powered a generation of medical boards and language learners. But a new wave of tools has arrived: AI flashcard generators that take a PDF or a paragraph and hand you back a deck in minutes. They feel magical. They promise to eliminate the most tedious part of spaced repetition: the work of making cards.

So the question everyone is asking: should you abandon Anki for the sleek AI generators, or is there a reason to stick with the older tool?

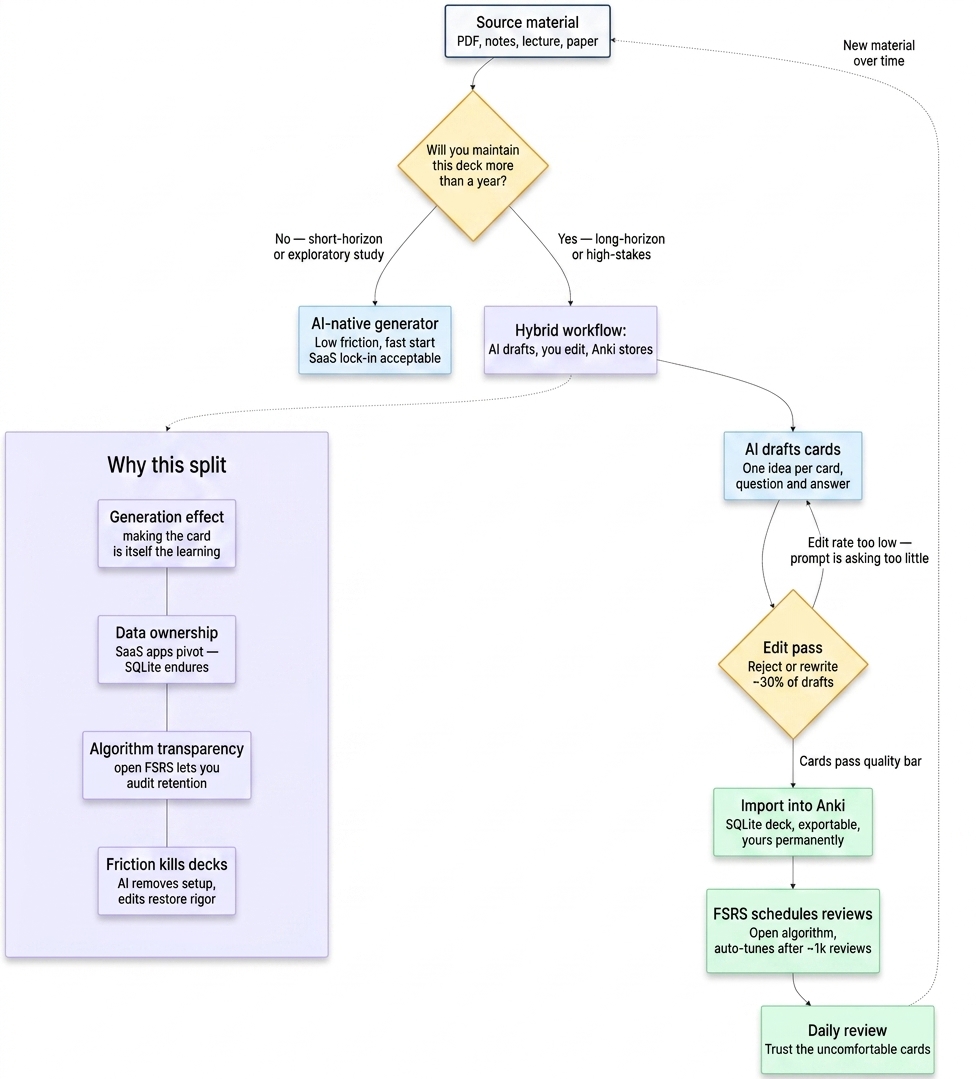

The honest answer is neither. AI removes friction, but some friction is where spaced repetition actually does its work. This guide briefly explains the memory science behind that claim, compares Anki with the current AI flashcard generators across four criteria, and gives you a workflow (Claude or ChatGPT plus Anki) that uses AI to save time on the parts that don't matter and keeps the effort where it does.

Part 1: Why Your Brain Forgets (And How to Stop It)

To understand why spaced repetition works, and why some kinds of AI "help" actively break it, we need four ideas from cognitive science.

The Forgetting Curve

In 1885, the psychologist Hermann Ebbinghaus ran a series of grueling experiments on himself, memorizing lists of nonsense syllables and then meticulously tracking how quickly he forgot them. The result was the forgetting curve: retention drops steeply in the first day after learning and then levels out, so that by day seven most of what you learned is gone unless you have reviewed it (Ebbinghaus, 1885/1913).

The solution Ebbinghaus found is the solution we still use: review the material at increasing intervals. Each review resets the clock and makes the next decline gentler. This is spaced repetition in one sentence.
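To make the shape of the curve concrete, here is a minimal sketch that assumes a simple exponential model, R(t) = exp(-t / S), where S is a hypothetical "stability" measured in days. This is a common simplification, not Ebbinghaus's own fitted formula, and the growth factor applied after each review is an illustrative assumption rather than a measured value.

```python
import math

def retention(days_elapsed: float, stability: float) -> float:
    """Predicted recall probability under an assumed exponential model."""
    return math.exp(-days_elapsed / stability)

def simulate_reviews(review_days, stability=1.0, growth=2.5):
    """Return (day, retention just before the review) for each review.

    Assumption: every review multiplies stability by `growth`, so each
    later decline is gentler. The factor 2.5 is illustrative only.
    """
    history = []
    last_review = 0.0
    for day in review_days:
        history.append((day, retention(day - last_review, stability)))
        stability *= growth  # the review "resets the clock" and slows decay
        last_review = day
    return history

if __name__ == "__main__":
    # Reviews at increasing intervals: 1, 2, 4, and 9 days apart.
    for day, r in simulate_reviews([1, 3, 7, 16]):
        print(f"day {day:>2}: retention before review = {r:.2f}")
```

Under these assumptions the gaps between reviews grow from one day to nine, yet predicted retention at each review does not fall, which is the pattern the paragraph above describes.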

Active Recall

Retrieving information from memory strengthens it far more than reviewing it passively. In the canonical study, Roediger and Karpicke (2006) showed that students who took a short test on a passage remembered it substantially better on a delayed test a week later than students who re-read the same passage the same number of times. Testing, not re-reading, is what builds durable memory.

Flashcards are active recall by design: the front prompts a retrieval attempt before you see the back. Re-reading your notes feels productive but produces much weaker long-term retention.

Desirable Difficulty

Robert Bjork's work on "desirable difficulties" (Bjork, 1994) is the insight that learning works best when it's hard in the right way. Reviews that come too soon, when the answer is still fresh, feel effortless and produce weak memory traces. Reviews timed to arrive just before you would have forgotten feel uncomfortable, but each successful retrieval at that moment strengthens the memory disproportionately. A good spaced repetition system deliberately schedules reviews to land at the edge of forgetting.

This is why the algorithm matters. A scheduler that shows you cards too often feels easier and produces worse retention than one that waits until the item is almost gone and makes you work to bring it back.
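As a rough illustration of "waiting until the item is almost gone", the sketch below computes how long a scheduler would wait for predicted recall to fall to a chosen target. It assumes the same simplified exponential model, R(t) = exp(-t / S); real schedulers such as FSRS use their own fitted curves, and the function name and numbers here are hypothetical.

```python
import math

def interval_for_target(stability: float, target_retention: float) -> float:
    """Days until predicted recall decays to `target_retention`.

    Assumes R(t) = exp(-t / stability), so t = -stability * ln(target).
    """
    if not 0.0 < target_retention < 1.0:
        raise ValueError("target_retention must be strictly between 0 and 1")
    return -stability * math.log(target_retention)

if __name__ == "__main__":
    stability = 10.0  # hypothetical memory stability, in days
    for target in (0.95, 0.90, 0.80):
        days = interval_for_target(stability, target)
        print(f"target {target:.0%}: review after about {days:.1f} days")
```

A lower target means a longer wait and a harder retrieval. That is the trade-off a scheduler tunes: fewer reviews, each one landing closer to the edge of forgetting.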

The Generation Effect

When you have to construct information yourself (choose the question, phrase the answer, decide what distinction matters), you remember it better than when someone hands it to you pre-packaged. Slamecka and Graf (1978) demonstrated this effect directly: participants who generated words from a rule remembered them significantly better than participants who simply read the same words.

This is the principle most at risk when AI writes your cards for you. The act of card-making is itself a study technique. Skip it entirely and you can end up with a pile of cards that feel like studying but do not stick.

With those four ideas in hand (forgetting curve, active recall, desirable difficulty, generation effect), the comparison between Anki and AI flashcard generators stops being about features and starts being about which trade-offs matter for long-term memory.

Part 2: The Tool Landscape in 2026

Before the deep dive, a quick tour of what's available. Not a listicle, just enough to place the main characters.

Anki is free, open-source, and runs locally on desktop and mobile. Since version 23.10, Anki has shipped with FSRS (Free Spaced Repetition Scheduler) — the default scheduler for new installations and the recommended choice for existing users, who are prompted to switch from the older SM-2 algorithm via Deck Options. FSRS is an open, community-developed algorithm that fits a per-user memory model to your review history and targets a retention level you set. Independent benchmarks show it substantially outperforms SM-2 on retention prediction (Expertium, 2026); in practical terms, community reports commonly cite ~20–30% fewer reviews at the same retention, though results vary by deck and target. Anki is the gold standard for serious, long-horizon learning; it's also the ugliest interface in this comparison.

RemNote is a freemium note-taking-plus-SRS tool. Cards are written inline in your notes with a simple :: syntax and are scheduled automatically. Good for users who want flashcards to emerge naturally from notes rather than be a separate activity.

Obsidian with a spaced-repetition plugin lets PKM users keep their cards inside their markdown notes graph. Some plugins now support FSRS, and others have added AI flashcard generation from existing notes, though few do both. High ceiling; local-first; best for people already living in Obsidian.

Quizlet is the most widely used flashcard platform by a large margin. It has added AI features (Magic Notes, AI-generated practice questions) but its default spacing is less configurable and less transparent than Anki's FSRS. It's excellent for acquainting yourself with a topic; not the tool to reach for when you need to remember something for years.

SuperMemo is the original SRS, still has the most sophisticated algorithm (SM-20 as of 2026; Wikipedia, 2026), and is the only tool that natively supports incremental reading. Windows-only, paid, power-user niche.

AI-native generators such as StudyGlen, Laxu, Deckbase, Jungle AI (formerly Wisdolia), and StudyFetch, along with Google's NotebookLM, which takes a different shape of the same idea, represent the new category. They ingest PDFs, slides, videos, or pasted text and produce a deck in minutes. Their scheduling internals are typically undisclosed: a few publicly use FSRS, most don't say. They also vary widely in card quality, data portability, and pricing.

For the comparison that follows, "Anki" stands in for the serious-learner option and "AI-native generators" stand in for the current wave of AI flashcard tools as a class. Most of what we say applies to any of them.

Part 3: Anki vs AI Flashcards — Four Criteria

Feature matrices are cheap. What actually matters is how each option performs against the cognitive science. Four criteria.

Criterion 1: Card quality and the generation effect

AI-generated cards are a straight conversion: the model reads a paragraph and emits N question-answer pairs. For straightforward factual recall ("What year did the Treaty of Westphalia end the Thirty Years' War?") this works fine. For anything requiring judgment about what distinction matters, it usually doesn't. The model doesn't know which details are load-bearing for your exam or project and which are incidental; it often picks wrong.

More importantly, the act of making the card is itself a form of elaborative study. When you condense a paragraph into a single question and answer, you're forced to decide what the core idea is. That decision is where much of the learning happens. Outsource the decision entirely and you lose the generation effect we discussed above.

Verdict: Anki, hand-crafted, produces higher-retention cards. But this is mitigable. Use AI to draft cards and then edit every one before importing. You still do the deciding; the AI just handles the typing. Plan on editing or rejecting roughly 30% of what the AI produces. If your edit rate is much lower than that, you are probably accepting cards too readily.

Criterion 2: Algorithm transparency

Anki's FSRS is open-source, well-documented, and published benchmarks let you audit how it performs (Expertium, 2026). Once you've accumulated some review history, it can auto-tune its parameters to your specific memory (Anki 24.06+ removed the old 1,000-review minimum, so the optimizer is usable much earlier; AnkiWeb, 2026), and you can inspect what it learned.

AI-native tools rarely publish their scheduler internals or expose retention data in a way you can audit. If a vendor pivots or shuts down, your learning history (the data the algorithm was optimizing against) goes with them.

Verdict: Anki wins on transparency. For a high-stakes, long-horizon deck, this matters. For a one-semester study deck it may not.

Criterion 3: Portability and data ownership

Anki decks are a documented SQLite database you can export, re-import, back up to Git, or convert to text. The content you author is yours permanently.
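As a sketch of what that ownership looks like in practice, the snippet below reads a few notes straight out of a collection file with Python's built-in `sqlite3` module. It assumes an uncompressed SQLite collection containing a `notes` table with `flds` and `tags` columns, where a note's fields are joined by the 0x1f unit separator. Newer Anki versions may package exports differently, so treat the layout as an assumption and work on a copy of your collection, never the live file.

```python
import sqlite3

FIELD_SEPARATOR = "\x1f"  # assumed separator between a note's fields

def read_notes(collection_path: str, limit: int = 5):
    """Return up to `limit` notes as (fields, tags) tuples.

    Assumes a `notes` table with `flds` and `tags` text columns.
    """
    conn = sqlite3.connect(collection_path)
    try:
        rows = conn.execute(
            "SELECT flds, tags FROM notes LIMIT ?", (limit,)
        ).fetchall()
    finally:
        conn.close()
    # Split the packed field string into fields, and the tag string into tags.
    return [(flds.split(FIELD_SEPARATOR), tags.split()) for flds, tags in rows]

if __name__ == "__main__":
    # "collection_copy.anki2" is a hypothetical path to a copied collection.
    for fields, tags in read_notes("collection_copy.anki2"):
        print(fields, tags)
```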

AI-native generators are SaaS. Your cards typically live in their cloud. Some offer Anki export (use those preferentially). Several SRS apps in the last five years have raised funding, pivoted, and taken their users' decks with them; this is a real pattern, not a theoretical risk.

Verdict: Anki wins decisively. Rule of thumb: if you plan to maintain a deck for more than a year, it should be an Anki deck regardless of how the cards were originally authored.

Criterion 4: Friction and habit formation

Here the AI-native tools win, and they win decisively. You can upload a PDF and start reviewing in ten minutes. With Anki you might spend an evening building your first deck before you do a single review. Research on habit formation consistently points the same way: friction kills compliance, and the deck you don't make is the deck you don't learn from.

If the realistic alternative to an AI-generated deck is no deck at all, the AI-generated deck is better. For casual learners, one-off topics, or the first week of a new subject, start with an AI-native tool. You can always migrate later.

Verdict: AI-native tools win on friction. For long-horizon deep domains (language, medicine, law, frequently-revisited technical skills), Anki's higher setup cost pays off many times over. For short-horizon or exploratory study, reach for the AI tool.

Summary table

| Criterion | Anki | AI-native generators | Implication |
|---|---|---|---|
| Card quality ceiling | High (if hand-crafted) | Medium (needs edit pass) | Use AI to draft, human to edit |
| Algorithm transparency | High (open FSRS, audit-able) | Low (proprietary, usually undisclosed) | Anki wins for long-horizon decks |
| Portability / data ownership | Very high (SQLite, exportable) | Low-to-medium (SaaS, often locked in) | Anki for anything you'll keep >1yr |
| Time-to-first-review | Hours (setup overhead) | Minutes | AI wins for casual / short-horizon |

The workable answer is almost never "Anki only" or "AI only." It's Anki as the long-term home of your learning, with AI as a drafting assistant for card creation. The tutorial below is how to do that cleanly.

Part 4: Using Claude to Generate Anki Cards (Without Breaking the Memory Science)

This section gives you three specific prompts you can use today. Claude and ChatGPT both work; the prompts transfer with no meaningful changes. I recommend Claude for this workflow. It's noticeably better at following structured output formats (particularly the TSV format Anki imports), more consistent across long documents, and less likely to silently drop cards when you ask for 40.

What makes a good flashcard

Before the prompts, the quality criteria the prompts are built around. Good cards are:

- Atomic. One card tests one idea. A card that asks "What are the causes, symptoms, and treatment of X?" is three cards in a trench coat.

- Specific. Unambiguous, one correct answer.

- Self-contained. Readable without surrounding context, on a phone, six weeks after you made it.

- Retrieval-oriented, not recognition-oriented. The front should require producing the answer, not picking it from a list.

The prompts below encode these as constraints.
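The criteria above can also be approximated mechanically as a first-pass filter. The sketch below is a set of rough heuristics, not a substitute for reading each card; the function name, the regular expressions, and the 25-word limit are all assumptions chosen for illustration.

```python
import re

def lint_card(front: str, back: str, max_back_words: int = 25) -> list[str]:
    """Return a list of likely problems with a card (empty if none found)."""
    problems = []
    lowered = front.lower()

    # Atomic: several question marks, or a comma list ending in "and",
    # suggests the card asks for more than one thing.
    if lowered.count("?") > 1 or re.search(r",[^?]*\band\b", lowered):
        problems.append("compound: may test more than one idea")

    # Retrieval-oriented: multiple-choice phrasing invites recognition.
    if re.search(r"which of the following|true or false", lowered):
        problems.append("recognition: answer can be picked, not produced")

    # Self-contained: references to surrounding material will not survive.
    if re.search(r"\b(the passage|the text|as above|this chapter)\b", lowered):
        problems.append("context: relies on material not on the card")

    # Specific: a very long back usually hides several answers.
    if len(back.split()) > max_back_words:
        problems.append("long back: consider splitting")

    return problems

if __name__ == "__main__":
    print(lint_card("What are the causes, symptoms, and treatment of X?", "..."))
    print(lint_card("Which of the following is a noble gas?", "Neon"))
```

Heuristics like these produce false positives (a legitimate question can contain a comma and "and"), so they are best used to decide which cards to look at first.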

Prompt 1: Convert source text into Anki-importable cards

Paste this into Claude with your source text substituted in. The output is a TSV block you can save as a .txt file and import into Anki directly, with no CSV escaping gotchas.

You are a cognitive-science-informed instructional designer creating
flashcards for the Anki spaced repetition system. Convert the source
text below into atomic, retrieval-oriented flashcards.

Rules (strict):

1. Output format: tab-separated values, one card per line, exactly
   three columns: Front[TAB]Back[TAB]Tag
   No header row. No markdown. No quotes around fields.
2. Tag format: one of `easy`, `medium`, `hard` based on how much
   retrieval effort the card requires. Use `medium` unless clearly
   otherwise.
3. Each card must test exactly one idea. Split compound cards.
4. The Front must require producing the answer (not recognizing it
   from a list). Avoid "Which of the following..." questions.
5. The Back must be concise. Single sentence or short phrase.
6. Skip trivia with no retrieval value (e.g. "What is the surname
   of the author who wrote X?" unless the author is the point).
7. If you are uncertain whether a card is well-formed, still emit
   it but prefix the Front with `[UNCERTAIN]` so the human can
   review it first.

Source text:

---

PASTE YOUR TEXT HERE

---

Copy the output, paste into a plain-text file, save with extension .txt, then in Anki use File → Import, set the delimiter to Tab, check that the three fields map to Front / Back / Tags, and import.
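Before importing, it can help to check that the model's output really is three tab-separated columns. The following is a minimal validation sketch under the rules of Prompt 1; the function name and the dictionary keys are hypothetical, and it only checks format, not card quality.

```python
ALLOWED_TAGS = {"easy", "medium", "hard"}

def validate_tsv(text: str):
    """Split model output into (cards, errors).

    A valid line has exactly three tab-separated fields:
    Front, Back, and a Tag from ALLOWED_TAGS.
    """
    cards, errors = [], []
    for line_no, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue  # ignore blank lines
        fields = line.split("\t")
        if len(fields) != 3:
            errors.append(f"line {line_no}: expected 3 columns, found {len(fields)}")
            continue
        front, back, tag = (field.strip() for field in fields)
        if not front or not back:
            errors.append(f"line {line_no}: empty Front or Back")
            continue
        if tag not in ALLOWED_TAGS:
            errors.append(f"line {line_no}: unexpected tag {tag!r}")
            continue
        cards.append({
            "front": front,
            "back": back,
            "tag": tag,
            # Cards the model flagged for human review come first in your edit pass.
            "uncertain": front.startswith("[UNCERTAIN]"),
        })
    return cards, errors

if __name__ == "__main__":
    sample = "What does FSRS stand for?\tFree Spaced Repetition Scheduler\teasy\n"
    cards, errors = validate_tsv(sample)
    print(len(cards), "valid card(s);", len(errors), "error(s)")
```

Lines that fail validation are reported rather than silently dropped, so you can fix them by hand or ask the model to regenerate just those cards.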

Prompt 2: Turn a single study note into cloze deletions

Cloze cards (a sentence with one key word or phrase hidden, which you have to produce) are often the best shape for interconnected concepts, because you keep the surrounding context while training a specific retrieval. Use this prompt when you have a study note and want to break it into cloze cards:

Convert the following study note into 2-4 Anki cloze deletion cards.
Each card should hide exactly one key term or phrase behind a cloze
deletion, keeping the rest of the sentence as context. Use Anki's
cloze syntax: {{c1::hidden_text}}.

Output format: one cloze card per line, no header.

Rules:

- Do not hide more than one distinct concept per card.
- The context around the cloze should be enough that the answer is
  derivable but not trivial.
- If the same word should be tested from multiple angles, produce
  multiple cards with different clozes.

Study note:

---

PASTE YOUR NOTE HERE

---

In Anki, use the built-in Cloze note type and paste one card per note.
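A quick format check on the cloze output can catch malformed lines before you paste them. This sketch assumes the `{{c1::hidden_text}}` syntax named in the prompt; the function name and the three-word context threshold are illustrative assumptions.

```python
import re

# Matches {{c1::text}} and captures the cloze number and the hidden text.
CLOZE_PATTERN = re.compile(r"\{\{c(\d+)::(.+?)\}\}")

def check_cloze_line(line: str, min_context_words: int = 3) -> list[str]:
    """Return a list of problems with one cloze card (empty if none found)."""
    matches = CLOZE_PATTERN.findall(line)
    if not matches:
        return ["no cloze deletion found"]

    problems = []
    if len(matches) > 1:
        problems.append("more than one deletion on a single card")

    # What remains visible once the hidden text is removed.
    visible = CLOZE_PATTERN.sub("", line)
    if len(visible.split()) < min_context_words:
        problems.append("too little context around the deletion")
    return problems

if __name__ == "__main__":
    card = "The {{c1::hippocampus}} is central to forming new episodic memories."
    print(check_cloze_line(card))
```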

Prompt 3: Audit an existing deck

If you already have an Anki deck, use this to find cards that are probably hurting you: too easy, too ambiguous, or redundant. Export your deck as tab-separated text first (Anki's File → Export → Cards in Plain Text), then feed chunks of it to Claude with this prompt:

You are reviewing flashcards for a spaced repetition deck. For each
card below, tell me if it should be KEPT AS IS, EDITED, or DELETED,
and why. Look specifically for:

- Recognition-only cards where you could guess the answer without
  knowing it
- Compound cards that test more than one idea
- Ambiguous cards with multiple defensible answers
- Redundant cards that duplicate other cards in the set
- Cards so trivially easy they won't build meaningful memory

Output format: for each card, output on one line:
[card number] VERDICT - one-sentence reason

Cards:

---

PASTE 20-50 CARDS HERE, NUMBERED

---

Expect to delete or edit 10-30% of the cards you audit. If Claude flags essentially nothing, either your deck is unusually clean or you should try the prompt on a different chunk and see if the results hold up.
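To see where a chunk falls relative to that 10-30% range, you can tally the verdict lines. The sketch below assumes the one-line output format requested in Prompt 3 and tolerates either a hyphen or a dash before the reason; the function name is hypothetical, and lines that don't match are returned so you can inspect them.

```python
import re
from collections import Counter

# Accepts "[12] EDITED - reason", "12 EDITED: reason", and dash variants.
VERDICT_LINE = re.compile(
    r"^\[?(\d+)\]?[.:)]?\s+(KEPT AS IS|EDITED|DELETED)\s*[\u2014\u2013:-]?\s*(.*)$"
)

def summarize_audit(output: str):
    """Return (verdict counts, share flagged for edit or delete, unparsed lines)."""
    counts = Counter()
    unparsed = []
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue
        match = VERDICT_LINE.match(line)
        if match:
            counts[match.group(2)] += 1
        else:
            unparsed.append(line)
    total = sum(counts.values())
    flagged = counts["EDITED"] + counts["DELETED"]
    share = flagged / total if total else 0.0
    return counts, share, unparsed

if __name__ == "__main__":
    sample = "[1] KEPT AS IS - clear and atomic\n[2] EDITED - asks two things"
    counts, share, unparsed = summarize_audit(sample)
    print(dict(counts), f"{share:.0%} flagged", unparsed)
```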

The non-negotiable step: edit before importing

This is where the generation effect re-enters your workflow. Read every card Claude produces. Cut the ones where the Front is something you could guess without knowing. Rewrite any card that's ambiguous. Split any card that asks for two things at once. Flag the [UNCERTAIN] cards for first-review attention.

The reason this matters more than any prompt-engineering tweak: the editing pass is where you do the work of deciding what to remember and what to leave behind. Skip it and the cards may technically cover the source, but the cognitive event that builds durable memory never happens. Rereadable, importable, and forgettable.

The AI does the typing. You still do the thinking.

Conclusion: Keep the Effort, Skip the Typing

The promise of AI flashcards is that they remove the friction of card-making. The promise is real, and the friction they remove is substantial. But the work of card-making is not all friction; some of it is where durable memory is actually built. Outsource the right parts and your workflow gets faster without getting worse. Outsource the wrong parts and you end up with a deck that feels like studying and doesn't stick.

Keep Anki (or an Anki-compatible tool with FSRS and real data portability) as the long-term home of what you are learning. Use AI to draft cards, to convert notes into clozes, to audit old decks for sloppy cards. Edit everything before it enters your review queue. And trust the algorithm: the reviews that feel uncomfortable are the ones that are working.

References

AnkiWeb. (2026). Frequently asked questions about FSRS. https://faqs.ankiweb.net/frequently-asked-questions-about-fsrs.html

Bjork, R. A. (1994). Memory and metamemory considerations in the training of human beings. In J. Metcalfe & A. P. Shimamura (Eds.), Metacognition: Knowing about knowing (pp. 185–205). MIT Press.

Ebbinghaus, H. (1913). Memory: A contribution to experimental psychology (H. A. Ruger & C. E. Bussenius, Trans.). Teachers College, Columbia University. (Original work published 1885.)

Expertium. (2026). Benchmark of Spaced Repetition Algorithms. https://expertium.github.io/Benchmark.html

Open Spaced Repetition. (2024). FSRS — Free Spaced Repetition Scheduler. https://github.com/open-spaced-repetition/fsrs4anki

Roediger, H. L., & Karpicke, J. D. (2006). Test-enhanced learning: Taking memory tests improves long-term retention. Psychological Science, 17(3), 249–255. https://doi.org/10.1111/j.1467-9280.2006.01693.x

Slamecka, N. J., & Graf, P. (1978). The generation effect: Delineation of a phenomenon. Journal of Experimental Psychology: Human Learning and Memory, 4(6), 592–604. https://doi.org/10.1037/0278-7393.4.6.592

Wikipedia. (2026). SuperMemo. https://en.wikipedia.org/wiki/SuperMemo